Foreword

In this article, we discuss the implementation of the Elman Network, or Simple Recurrent Network (SRN) [1],[2], in WEKA. The Elman NN implementation in WEKA is an extension of the already implemented Multilayer Perceptron (MLP) algorithm [3], so we first study the MLP and its training algorithm, and then continue with the Elman NN and its implementation in WEKA, based on our previous article on extending WEKA [4].

1. Feedforward Neural Networks: the Multilayer Perceptron (MLP)

In a feedforward network the output of a neuron is never fed back as input to a neuron on which it depends. For a given input, all the calculations required to compute the network’s output take place in the same direction: from the input to the output neurons [5].

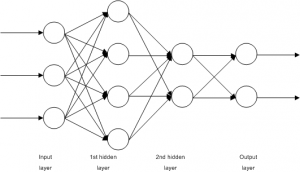

A Multilayer Perceptron (MLP) is a feedforward network in which the neurons are organized in layers. An example of an MLP network is shown in Figure 1. The main characteristic of this type of network is that there are no connections between neurons on the same layer. There is one input layer, one output layer and zero or more hidden layers. Usually every connection begins at a neuron on one layer and ends at a neuron on the next layer. Finally, the nodes on the input layer do not perform any calculation; they simply output their inputs. [5]

1.1. Training of MLP networks (error backpropagation algorithm)

The error backpropagation algorithm for the training of MLP networks was introduced in 1986 in a paper by Rumelhart, Hinton and Williams [6].

In this algorithm a set of $N$ training examples $D = \{(x^n, t^n)\}$, $n = 1, \dots, N$, is presented to the network, where $x^n = (x_1^n, \dots, x_d^n)^T$ is the input vector and $t^n = (t_1^n, \dots, t_p^n)^T$ is the target output. The network computes its output $o(x^n; w)$, where $x^n$ is the input vector and $w$ is the vector containing all weights and biases of the network. [5]

We can now define the squared error function [5] as follows:

$$E(w) = \frac{1}{2} \sum_{n=1}^{N} \sum_{m=1}^{p} \left( t_m^n - o_m(x^n; w) \right)^2 \qquad (1)$$

which is a sum of squared errors per example and is therefore bounded from below by 0, a value reached only in the case of perfect training. With $E^n(w)$ we denote the squared error for the example $(x^n, t^n)$, and so:

$$E(w) = \sum_{n=1}^{N} E^n(w), \qquad E^n(w) = \frac{1}{2} \sum_{m=1}^{p} \left( t_m^n - o_m(x^n; w) \right)^2 \qquad (2)$$

MLP training updates the weight vector $w$ in order to minimize the squared error $E(w)$. It is an application of the gradient descent method: starting from an initial weight vector $w(0)$, every repetition $k$ computes a weight vector difference $\Delta w(k)$ that moves, in small steps, in the direction in which the function $E(w)$ has the greatest rate of decrease. [5]
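As a small illustration of the squared error and of a single gradient descent step, here is a Python sketch. The function names (`example_error`, `total_error`, `gradient_step`) and the toy numbers are made up for this example, and the factor 1/2 in the error is a common convention that is assumed here.

```python
def example_error(target, output):
    """Squared error for one example: 0.5 * sum_m (t_m - o_m)^2 (1/2 factor assumed)."""
    return 0.5 * sum((t - o) ** 2 for t, o in zip(target, output))


def total_error(targets, outputs):
    """Total squared error: the sum of the per-example errors."""
    return sum(example_error(t, o) for t, o in zip(targets, outputs))


def gradient_step(weights, gradient, eta):
    """One gradient descent step: move each weight against its partial derivative."""
    return [w - eta * g for w, g in zip(weights, gradient)]


# Toy data: two examples with two outputs each (hypothetical values).
targets = [[1.0, 0.0], [0.0, 1.0]]
outputs = [[0.8, 0.1], [0.3, 0.6]]
print(total_error(targets, outputs))

# A perfect fit gives the lower bound of the error, which is zero.
print(total_error(targets, targets))
```

The error is zero only when every output equals its target, which is the "perfect training" case mentioned above.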

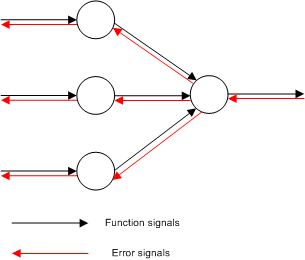

The training is a two-pass transmission through the layers of the network: a forward propagation and a backpropagation, as depicted in Figure 2. [1]

During the forward pass, the output values of all the network’s nodes are calculated and stored. These values are also called function signals; they travel from input to output and, at the end, produce a set of outputs which form the network’s output vector. This output vector is then compared with the target output vector $t^n$ and the error signal is produced for the output neurons. That signal is propagated in the opposite direction and the errors for all hidden neurons are calculated. [5]

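In order to describe the calculations that take place during the training of the MLP network, we need to define symbols for the various values. We denote [5] the $i$-th neuron on the $l$-th layer as $i^l$, and thus:

- $u_i^{(l)}$: the total input of the $i^l$ neuron.
- $y_i^{(l)}$: the output of the $i^l$ neuron.
- $\delta_i^{(l)}$: the error of the $i^l$ neuron.
- $w_{i0}^{(l)}$: the bias of the $i^l$ neuron.
- $g_l$: the activation function of neurons on the $l$-th layer.
- $d_l$: the number of neurons on the $l$-th layer.
- $w_{ij}^{(l)}$: the weight of the connection between the neurons $j^{l-1}$ and $i^l$.

The layers are numbered from the input to the output layer, and for the input layer $l = 0$. If $x = (x_1, \dots, x_d)^T$ is the input vector, then for the input layer’s neurons $y_i^{(0)} = x_i$. If $q$ is the output layer, then the network’s output is $o_i(x; w) = y_i^{(q)}$.

Given an MLP network with $d$ inputs, $p$ outputs and $H$ hidden layers, the input layer is layer $0$ with $d_0 = d$, and the output layer is layer $H+1$ with $d_{H+1} = p$. So, the computation of the output vector $o(x; w) = (o_1, \dots, o_p)^T$ for an input $x = (x_1, \dots, x_d)^T$ is as follows: [5]

Forward pass

Input layer

$$y_i^{(0)} = x_i, \quad i = 1, \dots, d, \qquad y_0^{(0)} = 1 \qquad (3)$$

Hidden and output layers

$$u_i^{(h)} = \sum_{j=0}^{d_{h-1}} w_{ij}^{(h)} y_j^{(h-1)}, \quad h = 1, \dots, H+1, \quad i = 1, \dots, d_h \qquad (4)$$

$$y_i^{(h)} = g_h\left(u_i^{(h)}\right), \quad h = 1, \dots, H+1, \quad i = 1, \dots, d_h, \qquad y_0^{(h)} = 1 \qquad (5)$$

Network’s output

$$o_i = y_i^{(H+1)}, \quad i = 1, \dots, p \qquad (6)$$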
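The forward pass can be sketched in a few lines of Python. This is only an illustration, not WEKA's code: the names `logistic`, `identity` and `forward`, and the choice to store each neuron's weights as a row `[bias, w_1, ..., w_d]`, are assumptions made for this example.

```python
import math


def logistic(u):
    """Logistic (sigmoid) activation function."""
    return 1.0 / (1.0 + math.exp(-u))


def identity(u):
    """Linear activation function."""
    return u


def forward(layers, activations, x):
    """Propagate input x through the network and keep every layer's outputs.

    layers[h] holds one row per neuron: [bias, w_1, ..., w_d] (assumed layout).
    activations[h] is the activation function used by that layer.
    """
    outputs = [list(x)]                      # the input layer just outputs its inputs
    for weights, g in zip(layers, activations):
        prev = [1.0] + outputs[-1]           # constant 1 so the bias enters the sum
        outputs.append([g(sum(w * y for w, y in zip(row, prev))) for row in weights])
    return outputs


# Hypothetical 2-2-1 network: logistic hidden layer, linear output neuron.
layers = [
    [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],     # hidden layer: all weights zero
    [[0.0, 1.0, 1.0]],                      # output neuron: sums the hidden outputs
]
result = forward(layers, [logistic, identity], [3.0, -1.0])
print(result[-1])                           # the network's output vector
```

With all hidden weights at zero each hidden neuron outputs `logistic(0) = 0.5`, so the linear output neuron returns their sum.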

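At this point the error backpropagation can be applied as follows: [5]

Error backpropagation

Error calculations for the output neurons (layer $H+1$)

$$\delta_i^{(H+1)} = g'_{H+1}\left(u_i^{(H+1)}\right) \frac{\partial E^n}{\partial o_i}, \quad i = 1, \dots, p \qquad (7)$$

Backpropagation of the errors down to the first hidden layer’s neurons

$$\delta_i^{(h)} = g'_h\left(u_i^{(h)}\right) \sum_{j=1}^{d_{h+1}} w_{ji}^{(h+1)} \delta_j^{(h+1)}, \quad h = H, \dots, 1, \quad i = 1, \dots, d_h \qquad (8)$$

Calculation of the error’s partial derivatives with respect to the weights

$$\frac{\partial E^n}{\partial w_{ij}^{(h)}} = \delta_i^{(h)} y_j^{(h-1)} \qquad (9)$$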

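If the hidden neurons use the logistic activation function, whose derivative is $g'(u) = g(u)\,(1 - g(u))$, equation (8) can be written as:

$$\delta_i^{(h)} = y_i^{(h)} \left(1 - y_i^{(h)}\right) \sum_{j=1}^{d_{h+1}} w_{ji}^{(h+1)} \delta_j^{(h+1)} \qquad (10)$$

and it is not necessary to store the $u_i^{(h)}$ values during the forward pass. This is also true for the output layer’s neurons. When $E^n$ is the squared error per example, equation (2) gives $\partial E^n / \partial o_i = -(t_i^n - o_i)$. So, if their activation function is the logistic, then:

$$\delta_i^{(H+1)} = -o_i \left(1 - o_i\right) \left(t_i^n - o_i\right) \qquad (11)$$

and if it is linear:

$$\delta_i^{(H+1)} = -\left(t_i^n - o_i\right) \qquad (12)$$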
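To make the backward pass concrete, the following Python sketch computes the error terms and the partial derivatives for one training example. It assumes a single logistic hidden layer, linear output neurons and the squared error with a factor 1/2. All names and numbers are illustrative and not taken from WEKA.

```python
import math


def logistic(u):
    return 1.0 / (1.0 + math.exp(-u))


def forward(w_hidden, w_out, x):
    """Forward pass; each weight row is [bias, w_1, ..., w_d] (assumed layout)."""
    prev = [1.0] + list(x)
    hidden = [logistic(sum(w * y for w, y in zip(row, prev))) for row in w_hidden]
    out = [sum(w * y for w, y in zip(row, [1.0] + hidden)) for row in w_out]
    return hidden, out


def example_error(w_hidden, w_out, x, t):
    """Squared error for one example."""
    _, out = forward(w_hidden, w_out, x)
    return 0.5 * sum((ti - oi) ** 2 for ti, oi in zip(t, out))


def backprop(w_hidden, w_out, x, t):
    """Return the partial derivatives of the per-example error for all weights."""
    hidden, out = forward(w_hidden, w_out, x)
    # Output errors for linear neurons: delta = -(t - o).
    delta_out = [-(ti - oi) for ti, oi in zip(t, out)]
    # Hidden errors for logistic neurons: delta = y (1 - y) * sum_j w_ji delta_j.
    # Column i + 1 of an output row is the weight from hidden neuron i (column 0 is the bias).
    delta_hidden = [
        y * (1.0 - y) * sum(w_out[j][i + 1] * delta_out[j] for j in range(len(w_out)))
        for i, y in enumerate(hidden)
    ]
    # Derivative for each weight: error of the receiving neuron times output of the sender.
    grad_out = [[d * y for y in [1.0] + hidden] for d in delta_out]
    grad_hidden = [[d * y for y in [1.0] + list(x)] for d in delta_hidden]
    return grad_hidden, grad_out


# Hypothetical 2-2-1 network and one training example.
w_hidden = [[0.1, 0.2, -0.3], [-0.1, 0.4, 0.5]]
w_out = [[0.05, 0.6, -0.7]]
x, t = [1.0, 2.0], [0.5]
grad_hidden, grad_out = backprop(w_hidden, w_out, x, t)
print(grad_hidden, grad_out)
```

A convenient sanity check for a sketch like this is to compare one of the returned derivatives with a finite difference of `example_error` after nudging the same weight by a small amount.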

The above descriptions can be summarized in the following algorithm:

MLP training algorithm by minimizing the training error

1. Initialize the weight vector $w(0)$ with random values in a small interval $(-a, a)$, the learning rate $\eta$, the repetitions counter ($k = 0$) and the epochs counter ($\kappa = 0$).
2. Let $w(k)$ be the network’s weight vector at the beginning of epoch $\kappa$.
   - Start of epoch $\kappa$. Store the current values of the weight vector, $w_{old} = w(k)$.
   - For $n = 1, \dots, N$:
     - Select the training example $(x^n, t^n)$ and apply the error backpropagation in order to compute the partial derivatives $\partial E^n / \partial w_i$ of the per-example error.
     - Update the weights:

       $$w_i(k+1) = w_i(k) - \eta \frac{\partial E^n}{\partial w_i} \qquad (13)$$

     - Set $k = k + 1$.
   - End of epoch $\kappa$. Termination check. If true, terminate.
3. Set $\kappa = \kappa + 1$. Go to step 2.

An extra quantity can be added to equation (13), as in equation (14) below:

$$w_i(k+1) = w_i(k) - \eta \frac{\partial E^n}{\partial w_i} + \alpha \, \Delta w_i(k-1) \qquad (14)$$

where $\Delta w_i(k-1) = w_i(k) - w_i(k-1)$ is the previous weight change. This quantity is called the momentum term and the constant $\alpha$ the momentum constant. Momentum gives the network a kind of memory of the previous values of $\Delta w_i$. It has a stabilizing effect and helps prevent oscillations around the value of the minimum error. [5]
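The weight update with a momentum term can be sketched as follows. The function name `momentum_step` is hypothetical. The default values 0.3 and 0.2 are the learning rate and momentum defaults that WEKA's MultilayerPerceptron reports in its command-line help, and the quadratic toy error is an assumption chosen only to keep the example short.

```python
def momentum_step(weights, gradient, prev_delta, eta=0.3, alpha=0.2):
    """One update: delta = -eta * gradient + alpha * previous delta."""
    delta = [-eta * g + alpha * d for g, d in zip(gradient, prev_delta)]
    new_weights = [w + d for w, d in zip(weights, delta)]
    return new_weights, delta


# Toy problem: minimize E(w) = 0.5 * w^2, whose gradient is simply w.
w, delta = [1.0], [0.0]
trajectory = []
for _ in range(2):
    w, delta = momentum_step(w, w, delta)
    trajectory.append(w[0])
print(trajectory)
```

The first step has no previous change to remember, so it is a plain gradient step. From the second step on, part of the previous change is added to the new one.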

2. Recurrent Neural Networks: the Elman Network

The error backpropagation algorithm can be used to solve a wide range of problems. Feedforward networks, however, can only achieve a static mapping of the input space to the output space. In order to model dynamic systems such as the human brain, we need a network that is able to store internal states. [7]

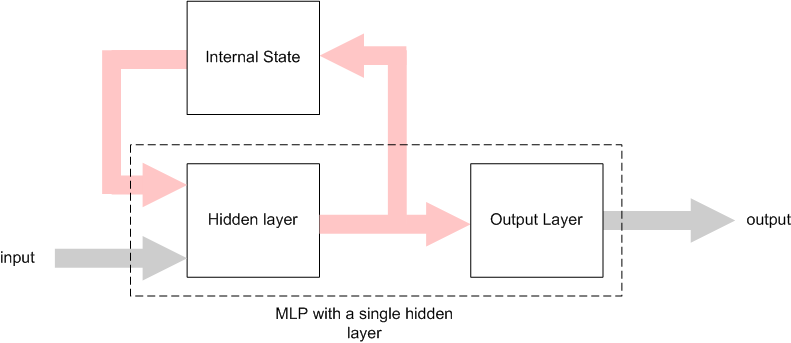

These types of networks are called Recurrent Neural Networks (RNN) and their main characteristic is their internal feedback or time-delayed connections. [7] One RNN architecture is the Simple Recurrent Network (SRN) or Elman Network (Figure 3). [1] [8]

As we can see from the above figure, an Elman network is an MLP with a single hidden layer that, in addition, contains connections from the hidden layer’s neurons to the context units. The context units store the output values of the hidden neurons at one time step, and these values are fed as additional inputs to the hidden neurons at the next time step. [1]

Assuming that we have a sequence of input vectors and a clock to synchronize the inputs, the following process takes place: the context units are initialized to 0.5. At a given time $t$ the first input vector is presented to the network and, together with the context units, forms the input for the hidden nodes. The hidden nodes’ outputs are then calculated and act as input for the output nodes, from which the network’s output is calculated. Finally, the hidden nodes’ outputs are also stored in the context units and the procedure is repeated for time $t + 1$. [8]
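The process described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the WEKA implementation: the names `elman_step` and `run_sequence`, the weight layout `[bias, input weights..., context weights...]` and the linear output neuron are all chosen for this example.

```python
import math


def logistic(u):
    return 1.0 / (1.0 + math.exp(-u))


def elman_step(w_hidden, w_out, x, context):
    """One time step: hidden nodes see the input vector joined with the context units."""
    joined = [1.0] + list(x) + list(context)
    hidden = [logistic(sum(w * v for w, v in zip(row, joined))) for row in w_hidden]
    # Linear output neurons (an assumption made for this sketch).
    out = [sum(w * v for w, v in zip(row, [1.0] + hidden)) for row in w_out]
    return out, hidden


def run_sequence(w_hidden, w_out, inputs):
    """Feed a sequence of input vectors through the network, one per clock tick."""
    context = [0.5] * len(w_hidden)          # context units start at 0.5
    outputs = []
    for x in inputs:
        out, hidden = elman_step(w_hidden, w_out, x, context)
        context = hidden                     # copy hidden outputs to the context units
        outputs.append(out)
    return outputs


# Hypothetical network: 1 input, 1 hidden node (plus 1 context unit), 1 output.
w_hidden = [[0.0, 1.0, 1.0]]
w_out = [[0.0, 1.0]]
outputs = run_sequence(w_hidden, w_out, [[0.0], [0.0]])
print(outputs)
```

Although the same input is presented twice, the two outputs differ, because the second step also sees the hidden state stored at the first step. A plain MLP cannot show this behaviour.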

2.1. Training of Elman networks

During the training of an Elman network, as in MLP training, the network’s output is compared with the target output and the squared error is used to update the network’s weights according to the error backpropagation algorithm [6], with the exception that the weights of the recurrent connections are fixed at 1.0 [8].

If $\tilde{x}^n$ is the vector produced by joining the input vector and the context vector, then the training algorithm for an Elman network is very similar to the algorithm for MLP training:

Elman training algorithm

1. Initialize the weight vector $w(0)$ with random values in a small interval $(-a, a)$, the learning rate $\eta$, the repetitions counter ($k = 0$) and the epochs counter ($\kappa = 0$). Initialize the context nodes to 0.5.
2. Let $w(k)$ be the network’s weight vector at the beginning of epoch $\kappa$.
   - Start of epoch $\kappa$. Store the current values of the weight vector, $w_{old} = w(k)$.
   - For $n = 1, \dots, N$:
     - Select the training example $(\tilde{x}^n, t^n)$ and apply the error backpropagation in order to compute the partial derivatives $\partial E^n / \partial w_i$ of the per-example error.
     - Update the weights:

       $$w_i(k+1) = w_i(k) - \eta \frac{\partial E^n}{\partial w_i} \qquad (13)$$

     - Copy the hidden nodes’ values to the context units.
     - Set $k = k + 1$.
   - End of epoch $\kappa$. Termination check. If true, terminate.
3. Set $\kappa = \kappa + 1$. Go to step 2.
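The training steps listed above can be put together in a compact Python sketch. It is a simplified illustration and not the WEKA code: the function name `train_elman`, the fixed (non-random) initial weights, the linear output neurons, the fixed number of epochs used as termination check and the toy alternating sequence are all assumptions made for this example.

```python
import math


def logistic(u):
    return 1.0 / (1.0 + math.exp(-u))


def train_elman(inputs, targets, n_hidden=2, eta=0.1, epochs=200):
    """Online backpropagation for an Elman network; returns the error of each epoch."""
    n_in, n_out = len(inputs[0]), len(targets[0])
    # Small fixed initial weights in {-0.1, 0, 0.1} keep the sketch deterministic
    # (an assumption; the algorithm in the text uses random values).
    w_h = [[0.1 * ((i + j) % 3 - 1) for j in range(1 + n_in + n_hidden)]
           for i in range(n_hidden)]
    w_o = [[0.1 * ((i + j) % 3 - 1) for j in range(1 + n_hidden)]
           for i in range(n_out)]
    context = [0.5] * n_hidden               # context nodes start at 0.5
    history = []
    for _ in range(epochs):
        epoch_error = 0.0
        for x, t in zip(inputs, targets):
            # Forward pass on the joined input/context vector.
            z = [1.0] + list(x) + context
            hidden = [logistic(sum(w * v for w, v in zip(row, z))) for row in w_h]
            zh = [1.0] + hidden
            out = [sum(w * v for w, v in zip(row, zh)) for row in w_o]
            epoch_error += 0.5 * sum((ti - oi) ** 2 for ti, oi in zip(t, out))
            # Backward pass: linear output neurons, logistic hidden neurons.
            d_out = [-(ti - oi) for ti, oi in zip(t, out)]
            d_hid = [y * (1.0 - y) * sum(w_o[j][i + 1] * d_out[j] for j in range(n_out))
                     for i, y in enumerate(hidden)]
            # Gradient descent update of every trainable weight.
            for j in range(n_out):
                for k in range(len(zh)):
                    w_o[j][k] -= eta * d_out[j] * zh[k]
            for i in range(n_hidden):
                for k in range(len(z)):
                    w_h[i][k] -= eta * d_hid[i] * z[k]
            # Copy the hidden nodes' values to the context units (fixed weight 1.0).
            context = hidden
        history.append(epoch_error)
    return history


# Toy task: predict the next value of the alternating sequence 0, 1, 0, 1, ...
seq = [0.0, 1.0] * 4 + [0.0]
inputs = [[v] for v in seq[:-1]]
targets = [[v] for v in seq[1:]]
history = train_elman(inputs, targets)
print(history[0], history[-1])
```

Note that the context units are treated as ordinary inputs during the backward pass, so no error is propagated through the copy connections. This matches the description of those connections as fixed.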

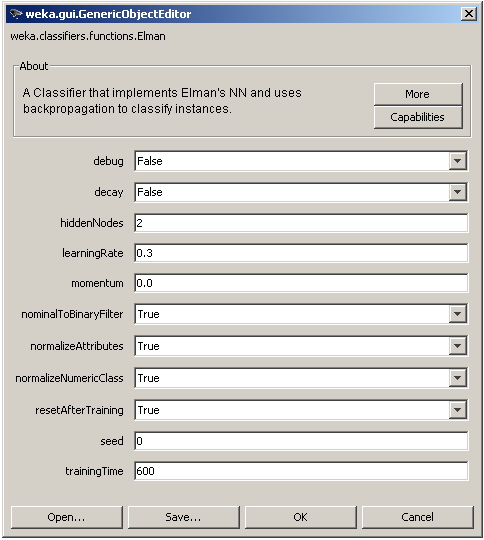

3. Implementation of Elman network in WEKA

The Elman network implementation is based on the MLP algorithm (which already exists in WEKA), slightly modified in some parts, such as its general architecture and the initialization phase. The training algorithm remained the same, with the addition of a step which copies the values of the hidden nodes to the context units.

The algorithm’s parameters that can be modified by the user, shown in Figure 4 above, include training parameters such as the learning rate (learningRate), the momentum constant (momentum) and the number of training epochs (trainingTime); architectural parameters such as the number of hidden nodes; an option to normalize the input vector (normalizeAttributes) and the numeric output value (normalizeNumericClass); and an option to transform nominal input values to binary (nominalToBinaryFilter). The seed parameter is used to initialize a random number generator for the initial randomization of the network’s weights. Finally, the parameter resetAfterTraining defines whether the internal state is reset after the training is complete.

4. Conclusions

In this article, we saw the differences between a common type of feedforward network (MLP) and a recurrent network (Elman or SRN). We also saw how the Elman neural network can be implemented in WEKA by modifying the code of the existing MLP network. The source code of the current Elman implementation for WEKA can be found at Elman.zip. It is not included as an official package in WEKA, as it cannot be integrated well due to WEKA’s lack of support for classifiers that contain an internal state. On the other hand, it can be used in custom Java code and it has already been used with success in my own BSc Thesis [9].

References

[1] S. Haykin, “Neural Networks and Learning Machines”, 3rd Edition, Pearson Education, 2008.

[2] J. Elman, “Finding structure in time”, Cognitive Science, 1990, vol. 14, pp. 179-211.

[3] I. H. Witten, E. Frank, “Data Mining – Practical Machine Learning Tools and Techniques”, 2nd Edition, Elsevier, 2005.

[4] J. Salatas, “Extending Weka”, ICT Research Blog, August 2011.

[5] A. Likas, “Τεχνητά Νευρωνικά Δίκτυα – Εφαρμογές” [Artificial Neural Networks – Applications], Artificial Intelligence – Applications, Volume B, Hellenic Open University, 2008 (in Greek).

[6] D. Rumelhart, G. Hinton, R. Williams, “Learning Internal Representations by Error Propagation”, Parallel Distributed Processing, MIT Press, 1986, pp. 318-362.

[7] K. Doya, “Recurrent Networks: Learning Algorithms”, The Handbook of Brain Theory and Neural Networks, 2nd Edition, MIT Press, 2003. pp. 955-960.

[8] J. Elman, “Finding structure in time”, Cognitive Science, 1990, vol. 14, pp. 179-211.

[9] J. Salatas, “Implementation of Artificial Neural Networks and Applications in Foreign Exchange Time Series Analysis and Forecasting”, Hellenic Open University, May 2011.

This site is very useful to complete my dissertation work of MCA.

Hi,

I tried to install this package using Weka Package Manager, but it fails returning this message of error:

java.lang.Exception: Unable to find Description file in package archive!

Please, can you help me fixing this? Thanks

What package? There is no package for Elman. You need to merge the files with the original files of WEKA source code (just copy them and overwrite any existing java file with the new ones).

Keep in mind that the algorithm doesn’t always work as it should through WEKA’s GUI, but it is ok for use in your own code.

Thanks for contacting me!

Thanks for the help, but I am a total Weka newbie 🙂

Can you be more specific? I found the .jar containing the source code and I added the files inside it, but then what? What do I have to do?

My turn to ask you to be more specific 🙂

Where did you find the .jar? What is the jar’s filename?

The jar file is weka-src.jar, inside the main Weka directory. I tried to follow the instructions here: http://weka.wikispaces.com/Netbeans+6.0+%28weka-src.jar%29 but they didn’t work. How did you recompile Weka?

Can you please try asking the same question to the WEKA’s mailing list [1]? After you have figured out how to compile WEKA’s source code ask me again about Elman if you are still having trouble. I will be glad to assist you.

[1] WEKA mailing list: https://list.scms.waikato.ac.nz/mailman/listinfo/wekalist

Hello,

Your site is very useful. Thank you!

Right now, I’m trying to build a recurrent neural network, and Elman’s algorithm relates to my field of interest. I already downloaded the source code above and inserted it into the original Weka source code. However, one error occurs because the class “weka.classifiers.AbstractClassifier” does not exist. I’m trying to create it on my own but I have no idea what to write and how. I searched through many sites but got confused. Could you please guide me? I’m not very good at Java, sort of a newbie, but I’m trying to learn.

Thank you in advance!

Hi!

It seems that there is something wrong with the WEKA source code you have downloaded. Can you please let me know which version of WEKA you are using, and from where and how you downloaded the source code?

Also please notice that the Elman algorithm was extensively tested in WEKA 3.7.2 and 3.7.3 and it *should* work in any newer version.

Regards,

John Salatas

Thank you for your reply.

The version I’m using is Weka 3.6.7. Since this is an older version, I assume it can’t work with the Elman code?

I actually downloaded this version from this site: http://sourceforge.net/projects/weka/files/weka-3-6-windows-jre-x64/3.6.7/weka-3-6-7jre-x64.exe/download.

When I accessed this site, I need to wait for about 5-10 seconds and the downloaded program started automatically.

If the older version matters, I’ll download the newer version Weka 3.7.6 and try working on it.

By the way, I have some more questions regarding how to run the program. Since I’m using Eclipse, can I just run the program as a java application right away? I’m concerned that the GUI might never appear and I won’t be able to choose Elman option that is edited into the original source code.

Thank you in advance.

Please try with version 3.7.2 or above. In case you try anything newer than 3.7.3, it would be great if you could provide your feedback!

Regards.

Hi again,

I tried with Weka version 3.7.6. I copied the two source files of the Elman algorithm and no error shows 🙂 However, I have always used Weka through its GUI, not from source code, so I have no idea how to run the program and choose the Elman option. Since the GUI never shows up, can you give some suggestions?

Do I need to use the command line in this case?

Right now I choose to run the program as a Java application and these lines show in the console..

Weka exception: No training file and no object input file given.

General options:

-h or -help

Output help information.

-synopsis or -info

Output synopsis for classifier (use in conjunction with -h)

-t

Sets training file.

-T

Sets test file. If missing, a cross-validation will be performed

on the training data.

-c

Sets index of class attribute (default: last).

-x

Sets number of folds for cross-validation (default: 10).

-no-cv

Do not perform any cross validation.

-split-percentage

Sets the percentage for the train/test set split, e.g., 66.

-preserve-order

Preserves the order in the percentage split.

-s

Sets random number seed for cross-validation or percentage split

(default: 1).

-m

Sets file with cost matrix.

-l

Sets model input file. In case the filename ends with ‘.xml’,

a PMML file is loaded or, if that fails, options are loaded

from the XML file.

-d

Sets model output file. In case the filename ends with ‘.xml’,

only the options are saved to the XML file, not the model.

-v

Outputs no statistics for training data.

-o

Outputs statistics only, not the classifier.

-i

Outputs detailed information-retrieval statistics for each class.

-k

Outputs information-theoretic statistics.

-classifications “weka.classifiers.evaluation.output.prediction.AbstractOutput + options”

Uses the specified class for generating the classification output.

E.g.: weka.classifiers.evaluation.output.prediction.PlainText

-p range

Outputs predictions for test instances (or the train instances if

no test instances provided and -no-cv is used), along with the

attributes in the specified range (and nothing else).

Use ‘-p 0’ if no attributes are desired.

Deprecated: use “-classifications …” instead.

-distribution

Outputs the distribution instead of only the prediction

in conjunction with the ‘-p’ option (only nominal classes).

Deprecated: use “-classifications …” instead.

-r

Only outputs cumulative margin distribution.

-xml filename | xml-string

Retrieves the options from the XML-data instead of the command line.

-threshold-file

The file to save the threshold data to.

The format is determined by the extensions, e.g., ‘.arff’ for ARFF

format or ‘.csv’ for CSV.

-threshold-label

The class label to determine the threshold data for

(default is the first label)

Options specific to weka.classifiers.functions.MultilayerPerceptron:

-L

Learning Rate for the backpropagation algorithm.

(Value should be between 0 – 1, Default = 0.3).

-M

Momentum Rate for the backpropagation algorithm.

(Value should be between 0 – 1, Default = 0.2).

-N

Number of epochs to train through.

(Default = 500).

-V

Percentage size of validation set to use to terminate

training (if this is non zero it can pre-empt num of epochs.

(Value should be between 0 – 100, Default = 0).

-S

The value used to seed the random number generator

(Value should be >= 0 and and a long, Default = 0).

-E

The consequetive number of errors allowed for validation

testing before the netwrok terminates.

(Value should be > 0, Default = 20).

-G

GUI will be opened.

(Use this to bring up a GUI).

-A

Autocreation of the network connections will NOT be done.

(This will be ignored if -G is NOT set)

-B

A NominalToBinary filter will NOT automatically be used.

(Set this to not use a NominalToBinary filter).

-H

The hidden layers to be created for the network.

(Value should be a list of comma separated Natural

numbers or the letters ‘a’ = (attribs + classes) / 2,

‘i’ = attribs, ‘o’ = classes, ‘t’ = attribs .+ classes)

for wildcard values, Default = a).

-C

Normalizing a numeric class will NOT be done.

(Set this to not normalize the class if it’s numeric).

-I

Normalizing the attributes will NOT be done.

(Set this to not normalize the attributes).

-R

Reseting the network will NOT be allowed.

(Set this to not allow the network to reset).

-D

Learning rate decay will occur.

(Set this to cause the learning rate to decay).

Thank you so much for your help!

Regards,

As I am explaining in the post, the algorithm isn’t working well through the WEKA’s GUI.

Therefore, I would say writing my own class and call the package consisting of Elman.java is a better alternative?

yes! 🙂

I am trying with version 3.7.10 and get the following error:

java.lang.Exception: Unable to find Description file in package archive!

at org.pentaho.packageManagement.DefaultPackageManager.getPackageArchiveInfo(Unknown Source)

at weka.core.WekaPackageManager.installPackageFromArchive(WekaPackageManager.java:1410)

at weka.gui.PackageManager$UnofficialInstallTask.doInBackground(PackageManager.java:741)

at weka.gui.PackageManager$UnofficialInstallTask.doInBackground(PackageManager.java:687)

at javax.swing.SwingWorker$1.call(Unknown Source)

at java.util.concurrent.FutureTask$Sync.innerRun(Unknown Source)

at java.util.concurrent.FutureTask.run(Unknown Source)

at javax.swing.SwingWorker.run(Unknown Source)

at java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source)

at java.lang.Thread.run(Unknown Source)

HELP!

It would be helpful if you could provide more details about it. For example, what did you do when you got this error? Is it reproducible?

Hi, nice work! In your opinion, can I use it to classify different classes, each described by several temporally ordered instances? If yes, I am thinking about the arff files for the input. Here is an example. I have to classify two classes. These classes are defined by a series of attributes in temporal order (10 instances). The first attribute is the temporal order. The input will be an arff file similar to this:

1 attrX … attrN ‘class1’

2 attrX … attrN ‘class1’

…

10 attrX … attrN ‘class1’

1 attrX … attrN ‘class2’

2 attrX … attrN ‘class2’

…

10 attrX … attrN ‘class2’

1 attrX … attrN ‘class1’

2 attrX … attrN ‘class1’

…

10 attrX … attrN ‘class1’

Is it ok? How does your code consider the temporal order?

Thanks.

It’s great work. I tried to use the source code which you uploaded, but I found that ‘feedback’ in the setLink method is not recognized. Do I have to use the NeuralConnection class that is included in elman.zip? When I use that NeuralConnection class, I run into problems in elman.java: the addNode function cannot accept its input because of a type mismatch. Any suggestion? Thank you very much for your kindness.

Yes, you need to replace all the classes in WEKA with the ones in the zip. I know this is really bad programming practice and needs to be fixed some time 🙂

I tried to use the NeuralConnection from your zip file, but I got an error in elman.java, “NeuralConnection in elman.java cannot be applied to weka.classifiers.functions.neural.NeuralNode)”, in the addNode method. Does my weka.jar version not match elman.java or NeuralConnection.java? I’m sorry to trouble you, thank you very much, Sir.